Giving Your AI a Personality with Custom Voice Skills

Why Custom Voice Skill Creation Is the Next Step for Smart Home Automation

Custom voice skill creation is the process of building a personalized, voice-activated application that responds to specific spoken commands — going far beyond what built-in voice assistant features can do out of the box.

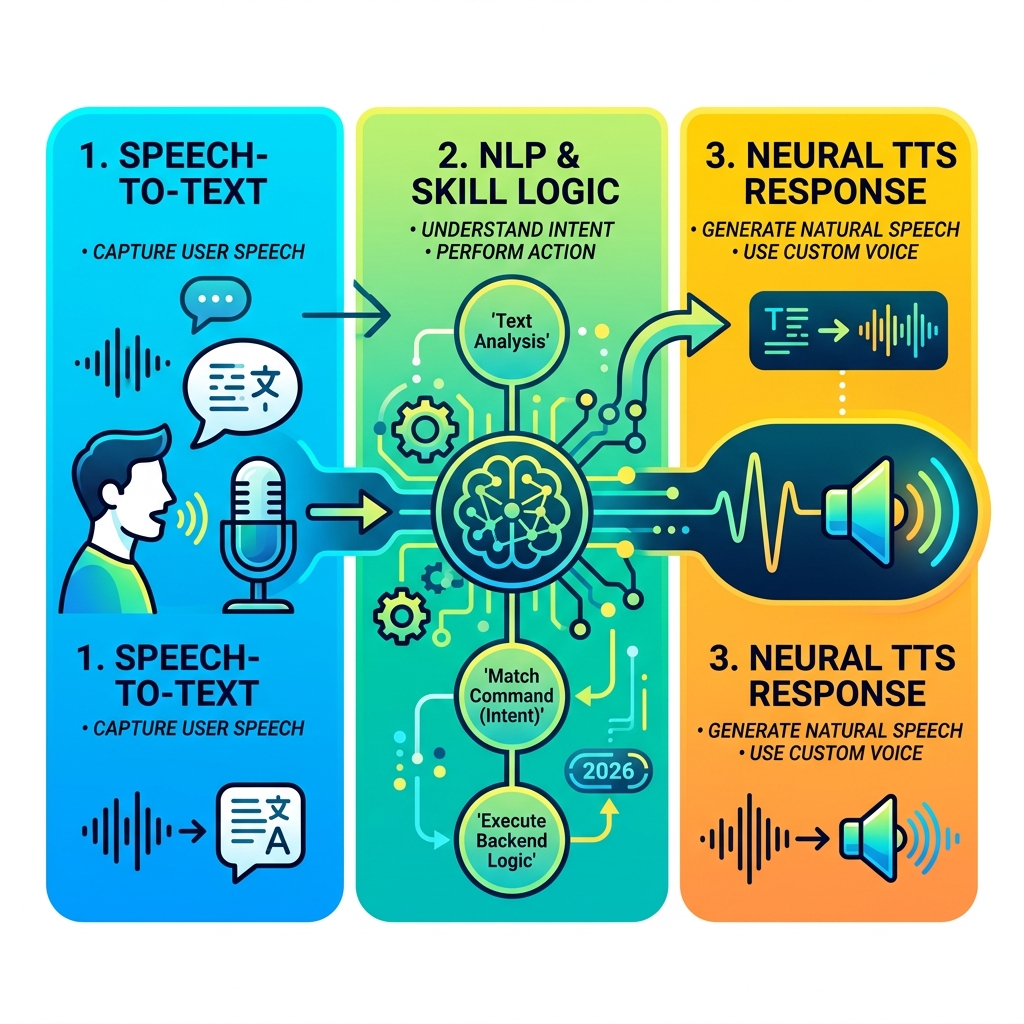

Here’s a quick overview of how it works:

- Design your voice interaction model (what users say and what it means)

- Build the backend logic that processes requests and returns responses

- Optionally, train a custom neural voice with recorded speech samples (at least 300 utterances)

- Deploy the skill to a voice platform like Amazon Alexa or a custom endpoint

- Test and certify before publishing to users

If you’ve ever felt like your smart home assistant just doesn’t get you — defaulting to generic answers, missing context, or sounding robotic — you’re not alone.

Standard voice assistant features are built for everyone, which means they’re perfectly optimized for no one in particular. They can tell you the weather and set a timer. But they can’t manage your specific devices, follow your unique routines, or speak in a voice that actually fits your brand or home setup.

That’s exactly the gap that custom voice skills are designed to fill.

Think of a custom voice skill like an app — but instead of tapping a screen, you’re talking. Platforms like Amazon Alexa describe skills as exactly that: “like apps for Alexa.” They let you define what users say, what happens when they say it, and even how the response sounds.

For busy people managing multiple smart devices, this isn’t just a nice-to-have. It’s the difference between a fragmented, frustrating setup and a system that genuinely works the way you think.

Understanding Custom Voice Skill Creation vs. Standard Features

When we talk about custom voice skill creation, we are stepping out of the “one-size-fits-all” world. Standard features are the pre-baked commands your device comes with—like asking for the time or a joke. A custom skill, however, is a bespoke piece of software. It allows us to define a unique “Interaction Model,” which is essentially the map of how a human conversation translates into digital actions.

The difference lies in ownership and flexibility. With standard features, you are a guest in the assistant’s world. With a custom skill, you are the architect. You define the Skill Interface (the part that hears and understands) and the Skill Service (the backend logic that decides what to do). This is particularly useful if you are looking for beginner-friendly voice assistant tips to make your home feel more personal.

According to Amazon’s “Steps to Build a Custom Skill” guide in the Alexa Skills Kit documentation, building a skill involves mapping “utterances” (what people say) to “intents” (what people want). By creating these yourself, you ensure your smart home responds to your specific brand of logic.
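To make the utterance-to-intent idea concrete, here is a minimal sketch in Python. It is an illustration only: the intent names, sample phrases, and the `resolve_intent` helper are hypothetical, and a real platform relies on trained language understanding rather than the exact matching used here.

```python
# Hypothetical, simplified interaction model: each intent lists sample
# utterances. Names and phrases are illustrative assumptions, not a real schema.
INTERACTION_MODEL = {
    "invocationName": "my smart home",
    "intents": [
        {"name": "TurnOnLightsIntent",
         "samples": ["turn on the lights", "lights on", "light up the room"]},
        {"name": "GoodNightIntent",
         "samples": ["good night", "time for bed", "shut everything down"]},
    ],
}


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    kept = "".join(ch for ch in text.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())


def resolve_intent(utterance: str, model: dict = INTERACTION_MODEL) -> str:
    """Return the intent whose sample matches exactly, else a fallback name."""
    spoken = normalize(utterance)
    for intent in model["intents"]:
        if spoken in (normalize(sample) for sample in intent["samples"]):
            return intent["name"]
    return "FallbackIntent"


if __name__ == "__main__":
    print(resolve_intent("Lights on!"))
    print(resolve_intent("Sing me a song"))
```

The fallback name matters as much as the matches: it gives the skill a defined answer when someone says something the model never anticipated.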

Why Businesses Prioritize Custom Voice Skill Creation

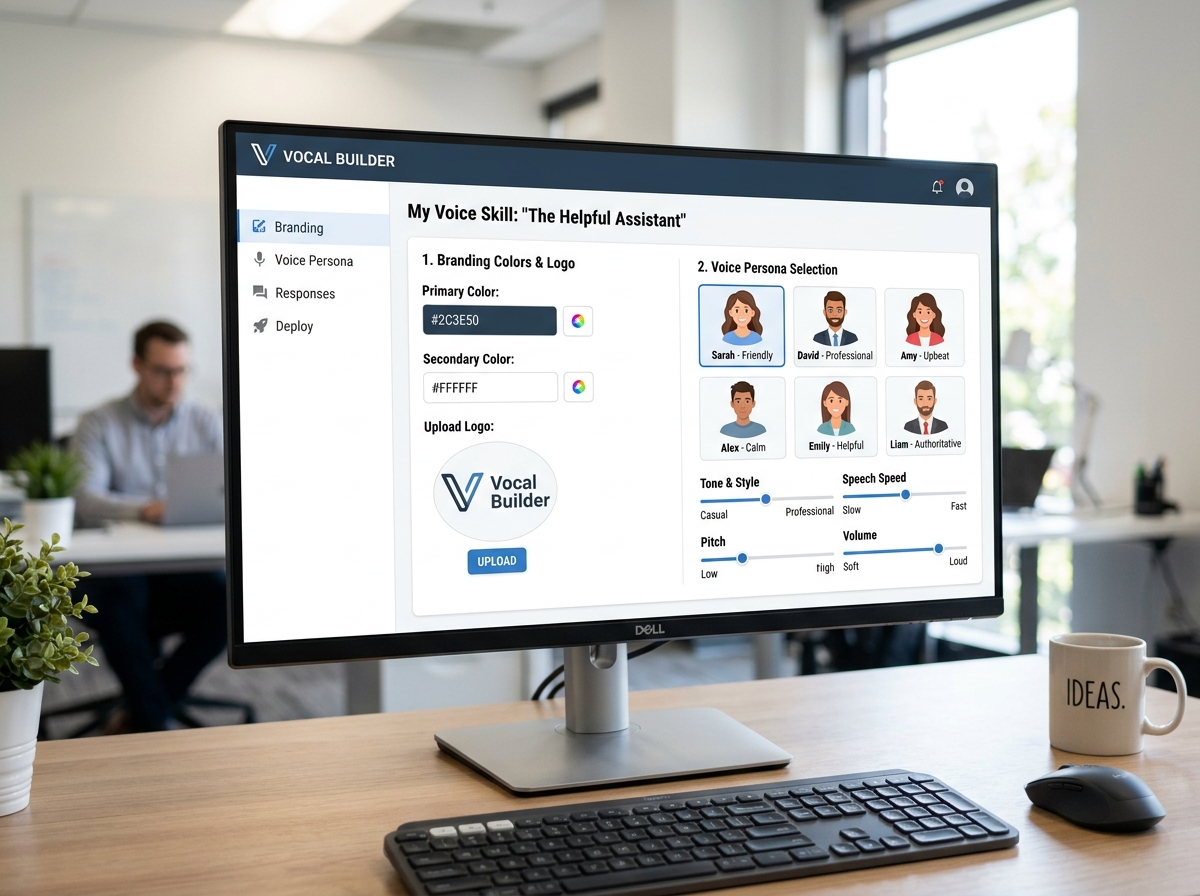

In the business world, a voice is more than just audio; it’s a brand identity. Companies use custom voice skill creation to personify their machines, turning a cold interface into a helpful brand ambassador. Imagine a hotel where the “concierge” in every room has a consistent, warm, and professional voice that matches the hotel’s luxury branding.

This provides a massive competitive advantage. Instead of sounding like every other generic smart speaker, a business can offer a unique customer engagement experience. It’s about building a “persona brief”—a document that outlines the voice’s age, tone, and mannerisms—to ensure the AI feels like a real member of the team. If you’re just starting out, checking out first steps to mastering smart home assistants can help you see how these professional concepts apply to your personal automation.

Core Components of Voice Architecture

To build a truly natural voice, we have to look under the hood. Modern custom voice skill creation utilizes Neural Text-to-Speech (TTS) technology. This architecture generally consists of three major components:

- Text Analyzer: This breaks down sentences into a phoneme sequence. Phonemes are the basic units of sound that distinguish words in a language.

- Neural Acoustic Model: This takes those phonemes and predicts the acoustic features—the “texture” and “rhythm” of the speech.

- Neural Vocoder: This final stage converts those acoustic features into actual audible waves that we hear through the speaker.

By mastering these components, as detailed in Microsoft Learn’s “Custom voice overview” for the Speech service, we can create voices that don’t just speak, but speak with emotion and nuance.
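The three stages are easier to picture as a data pipeline. The sketch below shows only the flow of data from text to phonemes to acoustic features to audio samples. Every function is a toy stand-in written for this article: the tiny lexicon, the pitch rule, and the sine-wave "vocoder" are assumptions, and none of it resembles a real neural model.

```python
import math

# Toy pronunciation table. Real text analyzers use large lexicons and
# learned models; these two entries are illustrative only.
TOY_LEXICON = {"hi": ["HH", "AY"], "home": ["HH", "OW", "M"]}


def analyze_text(text: str) -> list:
    """Stage 1 (text analyzer): words -> phoneme sequence."""
    phonemes = []
    for word in text.lower().split():
        phonemes.extend(TOY_LEXICON.get(word, ["UNK"]))
    return phonemes


def acoustic_model(phonemes: list) -> list:
    """Stage 2 (stand-in for the neural acoustic model):
    give each phoneme a (pitch in Hz, duration in ms) pair."""
    return [(120 + 10 * (i % 3), 80) for i, _ in enumerate(phonemes)]


def vocoder(features: list, sample_rate: int = 8000) -> list:
    """Stage 3 (stand-in for the neural vocoder):
    render each feature pair as a short sine wave."""
    samples = []
    for pitch_hz, duration_ms in features:
        count = sample_rate * duration_ms // 1000
        samples.extend(
            math.sin(2 * math.pi * pitch_hz * n / sample_rate)
            for n in range(count)
        )
    return samples


def synthesize(text: str) -> list:
    """Run the three stages in order."""
    return vocoder(acoustic_model(analyze_text(text)))


if __name__ == "__main__":
    print(analyze_text("hi home"))
    print(len(synthesize("hi")), "audio samples")
```

In a production system, each stand-in is replaced by a trained model, but the hand-off between stages follows the same order.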

The Technical Process of Custom Voice Skill Creation

Ready to get your hands dirty? The technical side of custom voice skill creation is where the magic happens. Many developers host their skill’s code on AWS Lambda. Lambda is “serverless,” meaning you don’t have to provision or manage servers; you upload your code (usually in Node.js or Python), and it runs whenever someone triggers your skill.

The process begins by choosing an Invocation Name. This is the phrase that tells the assistant to open your specific skill (e.g., “Alexa, open FinMoneyHub”). From there, you build the interaction model. For those looking for a more fluid experience, Amazon’s “Steps to Create a Skill with Alexa Conversations” guide in the Alexa Skills Kit documentation explains how to use AI and machine learning to create multi-turn dialogs that feel like a real conversation rather than a series of rigid commands. If you’re using an Amazon device, follow our guide on easy setup for Alexa at home to ensure your environment is ready for testing.
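As a rough idea of what the hosted code can look like, here is a minimal Python handler that works with the raw request JSON instead of an SDK. Treat it as a sketch under assumptions: `GoodNightIntent` is a hypothetical intent, the spoken phrases are placeholders, and a production skill would also verify requests and handle more request types.

```python
def build_response(speech_text: str, end_session: bool = True) -> dict:
    """Wrap plain text in the JSON envelope an Alexa skill returns."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": speech_text},
            "shouldEndSession": end_session,
        },
    }


def lambda_handler(event: dict, context) -> dict:
    """Entry point that AWS Lambda calls with the skill request as `event`."""
    request = event.get("request", {})
    request_type = request.get("type")

    if request_type == "LaunchRequest":
        # The user opened the skill by its invocation name with no command yet.
        return build_response("Welcome. What would you like to do?",
                              end_session=False)

    if request_type == "IntentRequest":
        intent_name = request.get("intent", {}).get("name")
        if intent_name == "GoodNightIntent":  # hypothetical intent name
            return build_response("Good night. Turning everything off.")
        return build_response("Sorry, I don't know that one yet.")

    # Other request types are not handled in this sketch.
    return build_response("Goodbye.")


if __name__ == "__main__":
    fake_event = {"request": {"type": "IntentRequest",
                              "intent": {"name": "GoodNightIntent"}}}
    print(lambda_handler(fake_event, None))
```

Because the handler is a plain function that takes and returns dictionaries, you can call it locally with a hand-written event before deploying anything.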

Enhancing Output with SSML in Custom Voice Skill Creation

Once your skill can “think,” you need to make sure it can “speak” beautifully. This is where Speech Synthesis Markup Language (SSML) comes in. Think of SSML like HTML for the voice. It allows us to use tags to adjust how the AI sounds in real-time.

Some common SSML uses include:

- Pitch Adjustment: Making the voice higher or lower to convey excitement or seriousness.

- Speaking Rate: Speeding up for a quick update or slowing down for instructions.

- Intonation: Adding emphasis to specific words.

- Voice Tags: Switching between different named voices (like “Kendra” or “Brian”) within the same skill to represent different characters.

Using SSML is one of the most effective smart speaker setup tips for beginners who want their assistant to sound less like a computer and more like a companion.
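The tags listed above can be assembled with a few small helpers. This is a sketch rather than an official library: the helper names are made up for this example, the attribute values are assumed to be trusted constants, and the set of supported voices and values depends on the platform.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def prosody(text: str, rate: str = "medium", pitch: str = "medium") -> str:
    """Adjust speaking rate and pitch for a phrase."""
    return f'<prosody rate="{rate}" pitch="{pitch}">{escape(text)}</prosody>'


def emphasis(text: str, level: str = "moderate") -> str:
    """Stress a word or phrase."""
    return f'<emphasis level="{level}">{escape(text)}</emphasis>'


def voice(text: str, name: str) -> str:
    """Speak a phrase in a different named voice."""
    return f'<voice name="{name}">{escape(text)}</voice>'


def speak(*parts: str) -> str:
    """Wrap already-built SSML fragments in the root <speak> element."""
    return "<speak>" + " ".join(parts) + "</speak>"


def is_well_formed(ssml: str) -> bool:
    """Check that the markup parses as XML before sending it anywhere."""
    try:
        ET.fromstring(ssml)
        return True
    except ET.ParseError:
        return False


if __name__ == "__main__":
    ssml = speak(
        prosody("Good morning!", rate="fast", pitch="high"),
        emphasis("Lock the door", level="strong"),
        voice("All set.", name="Brian"),
    )
    print(ssml)
    print(is_well_formed(ssml))
```

Escaping the text matters more than it looks: a stray `&` or `<` in a device name would otherwise produce invalid markup.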

Designing Intents and Slots for Natural Interaction

A great custom skill understands that humans don’t all speak the same way. When we design intents (the goal of the user), we provide sample utterances—different ways a user might phrase a request.

For example, if the intent is GetAccountBalance, utterances might include:

- “How much money do I have?”

- “What’s my balance?”

- “Check my account.”

We also use slots, which act as variables. In a pizza-ordering skill, for example, the size of the pizza is a slot. By using beginner-friendly assistant automation tips, you can mark slots as required so the assistant asks follow-up questions if the user forgets to mention a detail, like the crust type, and set up “slot validation” to check that the values it hears are acceptable.
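The follow-up behavior for slots can be sketched in plain Python. On a platform like Alexa this is typically configured in the skill's dialog settings rather than hand-coded, so read this as an illustration of the logic only. The slot names, allowed values, and prompts are assumptions made up for the pizza example.

```python
from typing import Optional

# Hypothetical required slots and the question to ask when one is missing.
REQUIRED_SLOTS = {
    "size": "What size pizza would you like?",
    "crust": "Which crust would you like?",
}

# Hypothetical accepted values for each slot.
ALLOWED_VALUES = {
    "size": {"small", "medium", "large"},
    "crust": {"thin", "thick", "stuffed"},
}


def next_prompt(slots: dict) -> Optional[tuple]:
    """Return (slot_name, prompt) for the first missing or invalid slot,
    or None when every required slot holds an accepted value."""
    for name, prompt in REQUIRED_SLOTS.items():
        value = (slots.get(name) or "").strip().lower()
        if not value:
            return name, prompt
        if value not in ALLOWED_VALUES[name]:
            options = ", ".join(sorted(ALLOWED_VALUES[name]))
            return name, f"Sorry, {name} can be {options}. {prompt}"
    return None


if __name__ == "__main__":
    print(next_prompt({"size": "large"}))
    print(next_prompt({"size": "huge", "crust": "thin"}))
    print(next_prompt({"size": "large", "crust": "thin"}))
```

The skill keeps calling `next_prompt` after each user reply and only places the order once it returns `None`.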

Quality Control and Responsible AI Guidelines

Creating a voice isn’t just about code; it’s about ethics and quality. To create a high-quality “Neural Voice,” you need a solid foundation of data.

| Requirement | Standard Voice | Custom Neural Voice |
|---|---|---|
| Utterance Count | Low (Generic) | Min. 300 utterances |
| Recording Environment | Home/Office | Professional Studio |
| Voice Talent | Synthetic/Stock | Professional Talent with Consent |
| Consistency | Variable | Strict Pitch/Rate/Tone |

As we see in the best smart home assistants 2026 projections, Responsible AI is becoming a legal and ethical requirement. This includes:

- Voice Talent Consent: You must have a recorded statement from the voice actor consenting to their voice being used for synthetic recreation.

- Transparency: Users should generally be aware they are talking to a synthetic voice.

- Data Privacy: Ensuring the recorded samples are stored securely and used only for the intended model training.
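Some of these requirements can be checked automatically before any training starts. The sketch below is a hypothetical pre-flight check, not part of any vendor's tooling: the `Recording` class and `check_dataset` function are invented for this example, and the 300-utterance threshold is the minimum cited in this article.

```python
from dataclasses import dataclass

MIN_UTTERANCES = 300  # minimum cited in this article


@dataclass
class Recording:
    transcript: str
    audio_file: str


def check_dataset(recordings: list, has_consent_statement: bool) -> list:
    """Return a list of problems; an empty list means the basics are covered."""
    problems = []
    if not has_consent_statement:
        problems.append("Missing recorded consent statement from the voice talent.")
    if len(recordings) < MIN_UTTERANCES:
        problems.append(
            f"Only {len(recordings)} utterances; need at least {MIN_UTTERANCES}."
        )
    untranscribed = [r for r in recordings if not r.transcript.strip()]
    if untranscribed:
        problems.append(f"{len(untranscribed)} recordings have no transcript.")
    return problems


if __name__ == "__main__":
    sample = [Recording(f"Sentence {i}", f"clip_{i}.wav") for i in range(120)]
    for problem in check_dataset(sample, has_consent_statement=False):
        print(problem)
```

A check like this cannot judge studio quality or tone consistency, which still need a human ear, but it catches the gaps that are cheapest to fix early.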

Testing, Deployment, and Monetization

Before you unleash your skill on the world, you have to put it through its paces. Developers use the Alexa Simulator or the Speech Studio testing environment to see how the skill handles various inputs. This is the “beta testing” phase where you find out if your code breaks when someone says something unexpected.

Once tested, the skill goes through a Certification Process. The platform provider (like Amazon) reviews the skill to ensure it meets security, privacy, and functional standards.

But how do you make it pay off? There are several ways to monetize:

- In-Skill Purchasing (ISP): Selling premium features, like extra “lives” in a game or advanced financial reports.

- Subscriptions: Charging a monthly fee for ongoing access to premium content.

- Amazon Associates: Earning a commission by helping users buy physical products through your skill.

For those connecting multiple smart assistants, monetization often comes from the added value and efficiency the skill provides to a broader ecosystem.

Frequently Asked Questions about Custom Voice Skills

What is the difference between a custom skill and a standard feature?

Standard features are pre-built by the platform provider, while custom skills allow developers to define unique interaction models, brand-specific voices, and specialized logic for niche tasks. Standard features are like the apps that come pre-installed on your phone, while custom skills are the apps you download (or build!) to make the device do exactly what you want.

How many voice samples are needed for a custom neural voice?

To create a high-quality custom neural voice, you typically need to provide at least 300 recorded utterances from a professional voice talent to train the model effectively. These samples should be recorded in a professional studio to ensure a high signal-to-noise ratio and consistent tone, pitch, and speaking rate.

Can I monetize my custom voice skill?

Yes, developers can monetize skills through in-skill purchasing (ISP), subscriptions, or by using the Amazon Associates program to recommend physical products within the voice experience. You can also offer “real-world” services, such as food delivery or car service bookings, and process those transactions through your skill.

Conclusion

At FinMoneyHub, we believe that technology should serve you, not the other way around. Custom voice skill creation is the ultimate tool for reclaiming control over your digital life. By moving away from generic, “out-of-the-box” features and building your own voice experiences, you unlock complex command capabilities that are easy to use and truly reflect your needs.

Whether you’re looking to optimize your smart tech routines or simplify your financial management through voice, the power to build a smarter, more personal assistant is in your hands. Don’t settle for a robotic helper when you can give your AI a personality that fits your home and your life.

For more insights on optimizing your digital devices, check out More info about smart assistant services.